Posts: 4,088

Days Won: 88

Everything posted by horst

-

I can definitely confirm that for the PageTree, and for the rare cases where a ListerPro page is additionally needed.

-

Maybe this is the better forum for it. Crosslink:

-

Issues Repository: If A Reported Issue Effects You...

horst replied to netcarver's topic in Wishlist & Roadmap

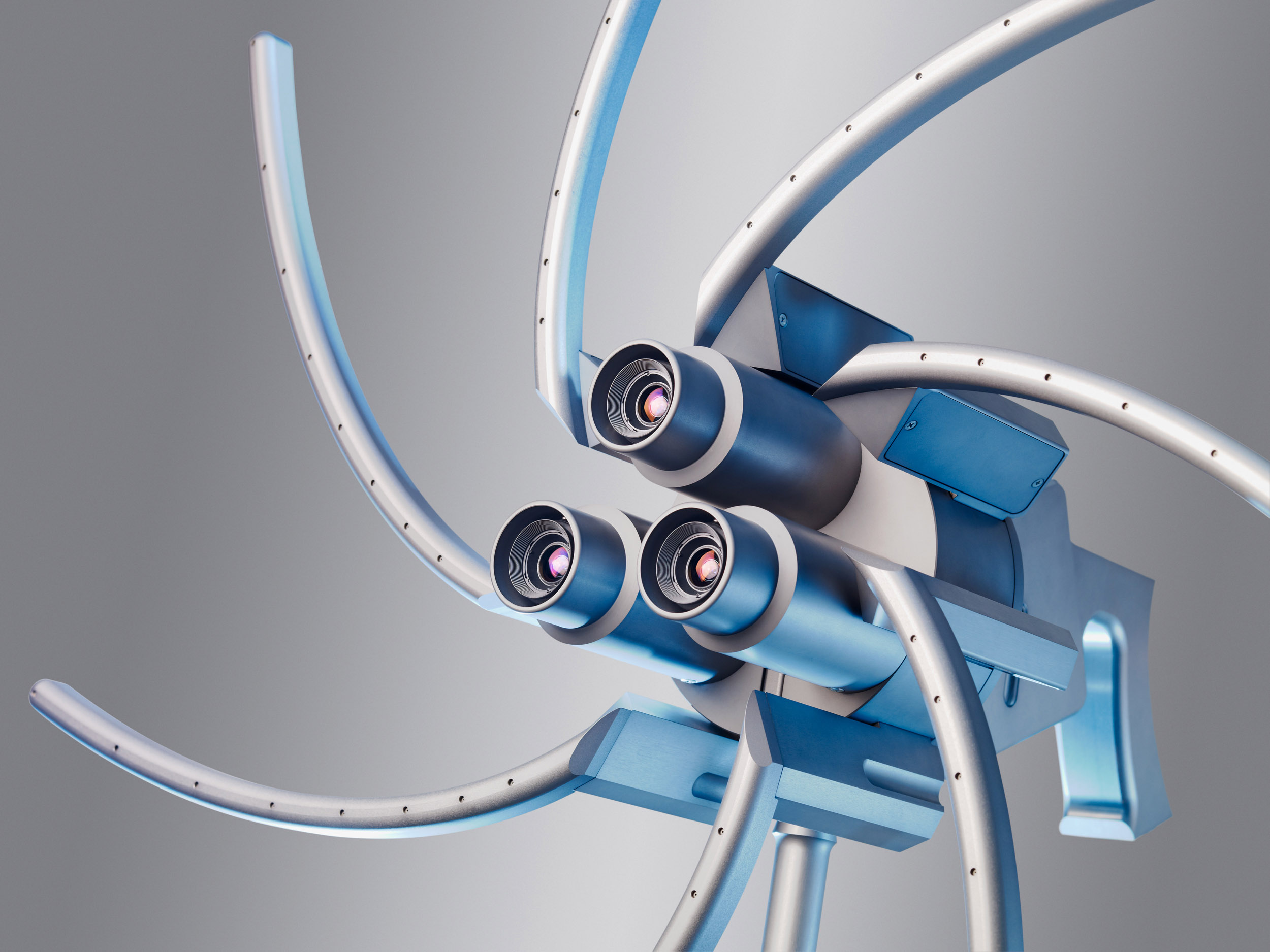

A bit off-topic, but related to GitHub's notifications and issue repos in general. Today I found a nice app that shows all new GitHub notifications in a clean way: all previously read comments in a thread are minimized. I found it, together with other tools, here: https://dev.to/maestromac/tools-i-use-to-stay-on-top-of-githubs-notifications-56mo Screenshot: see the image above.

Very nice site and a well-done write-up with good insights! Also nice to see that someone besides me is using Admin Links In Frontend these days.

-

https://duckduckgo.com/?q=site%3Aprocesswire.com%2Ftalk+server+locale+is+undefined&t=ffsb&ia=web

-

Because there are many languages that use those characters. They are not only "German umlauts"; they are used in multiple languages, and those languages all reduce them to a single base character (ä -> a, ö -> o, ü -> u). So the German convention, replacing the character with its base letter followed by an "e", is the minority case here.
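A minimal sketch of the two transliteration conventions in plain PHP. The helper names and the hand-written character maps are hypothetical and only cover the three umlauts (plus ß for the German style); this is an illustration, not how ProcessWire's sanitizer is implemented.

```php
<?php
// Hypothetical helper: reduce umlauts to their base letter (the majority convention)
function toBaseLetter(string $s): string {
    return strtr($s, ['ä' => 'a', 'ö' => 'o', 'ü' => 'u', 'Ä' => 'A', 'Ö' => 'O', 'Ü' => 'U']);
}

// Hypothetical helper: German convention, base letter followed by "e"
function toGermanStyle(string $s): string {
    return strtr($s, ['ä' => 'ae', 'ö' => 'oe', 'ü' => 'ue', 'Ä' => 'Ae', 'Ö' => 'Oe', 'Ü' => 'Ue', 'ß' => 'ss']);
}

echo toBaseLetter('Müller'), "\n";  // Muller
echo toGermanStyle('Müller'), "\n"; // Mueller
```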

-

[solved] which version numbers do you use? integer or string?

horst replied to bernhard's topic in Module/Plugin Development

Why not? Does version_compare() not work for you? Or extracting the major and minor parts separately? Or, if you only need consistency for modules under your control, why not define major/minor versions explicitly?
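A short sketch of why string versions plus version_compare() are safer than treating them as numbers: as floats, "1.10" is smaller than "1.9". The splitVersion() helper is hypothetical and assumes plain dot-separated numeric parts.

```php
<?php
// Float comparison gets multi-digit minor versions wrong: 1.10 becomes 1.1
var_dump((float) '1.10' > (float) '1.9');          // bool(false)

// version_compare() compares each dot-separated part numerically
var_dump(version_compare('1.10', '1.9', '>'));     // bool(true)
var_dump(version_compare('1.2.10', '1.2.9', '>')); // bool(true)

// Hypothetical helper: split "major.minor.patch" into integers,
// padding missing parts with 0
function splitVersion(string $version): array {
    $parts = array_map('intval', explode('.', $version));
    return array_pad($parts, 3, 0);
}

[$major, $minor, $patch] = splitVersion('2.5');
echo "$major.$minor.$patch\n"; // 2.5.0
```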

Checking page (all fields) against json feed

horst replied to louisstephens's topic in API & Templates

Using uncache has nothing to do with the function calls. Uncaching is useful in loops, at least with lots of pages, to free up memory. Every page you create, update, or check values of is loaded into memory and allocates some space. Without actively freeing it, your available RAM shrinks with each loop iteration. Therefore it is good practice to release objects that are no longer needed, even with a smaller number of iterations.
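To illustrate the principle outside of ProcessWire, here is a minimal simulation. DemoPageCache is a hypothetical stand-in that keeps every loaded item until it is explicitly released; it is not ProcessWire's actual page cache, where you would call `$pages->uncache($page)` instead.

```php
<?php
// Hypothetical stand-in for a page cache: every loaded item stays in memory
// until it is explicitly released.
final class DemoPageCache {
    private array $items = [];

    public function get(int $id): array {
        // load once (here: a fake 1 KB payload), then keep the item cached
        return $this->items[$id] ??= ['id' => $id, 'body' => str_repeat('x', 1024)];
    }

    public function uncache(int $id): void {
        unset($this->items[$id]); // drop the cache's reference so memory can be freed
    }

    public function count(): int {
        return count($this->items);
    }
}

// Runs an import loop and returns how many items are still held afterwards
function runImport(DemoPageCache $cache, int $n, bool $release): int {
    for ($id = 1; $id <= $n; $id++) {
        $page = $cache->get($id);
        // ... compare or update $page here ...
        if ($release) {
            $cache->uncache($id); // release the item once it is no longer needed
        }
    }
    return $cache->count();
}

echo runImport(new DemoPageCache(), 1000, false), "\n"; // 1000
echo runImport(new DemoPageCache(), 1000, true), "\n";  // 0
```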

Checking page (all fields) against json feed

horst replied to louisstephens's topic in API & Templates

Two or three things come to mind directly:

If there is no unique ID within the feed, you have to create one per item from the feed data and save it into an uneditable or hidden field of your pages. Additionally, you can concatenate all field values (strings and numbers) on the fly, generate a crc32 checksum (or something similar) from them, and save it into a hidden (or at least uneditable) field with every newly created or updated page.

Then, when running a new import loop, you extract or create the ID and create a crc32 checksum from the feed item on the fly. Query whether a page with that feed ID is already in the system: if not, create a new page and move on to the next item; if yes, compare the checksums. If they match, move on to the next item; if not, update the page with the new data.

Code example:

```php
$http = new WireHttp();
// Get the contents of a URL
$response = $http->get("feed_url");

if($response !== false) {
    $decodedFeed = json_decode($response);
    foreach($decodedFeed as $feed) {
        // create or fetch the unique id for the current feed item
        $feedID = $feed->unique_id;
        // create a checksum from the relevant values
        $crc32 = crc32($feed->title . $feed->body . $feed->status);

        $u = $pages->get("template=basic-page, parent=/development/, feed_id={$feedID}");

        if(0 == $u->id) {
            // no page with that id in the system yet
            $u = createNewPageFromFeed($feed, $feedID, $crc32);
            $pages->uncache($u);
            continue;
        }

        // page already exists, compare checksums
        if($crc32 == $u->crc32) {
            $pages->uncache($u);
            continue; // nothing changed
        }

        // values have changed, so we update the page
        $u = updatePageFromFeed($u, $feed, $crc32);
        $pages->uncache($u);
    }
} else {
    echo "HTTP request failed: " . $http->getError();
}

function createNewPageFromFeed($feed, $feedID, $crc32) {
    $u = new Page();
    $u->setOutputFormatting(false);
    $u->template = wire('templates')->get("basic-page");
    $u->parent = wire('pages')->get("/development/");
    // page name built from title + feed ID, sanitized to a valid page name
    $u->name = wire('sanitizer')->pageName($feed->title . '-' . $feedID, true);
    $u->title = $feed->title;
    $u->status = $feed->status;
    $u->body = $feed->body;
    $u->crc32 = $crc32;
    $u->feed_id = $feedID;
    $u->save();
    return $u;
}

function updatePageFromFeed($u, $feed, $crc32) {
    $u->of(false);
    $u->title = $feed->title;
    $u->status = $feed->status;
    $u->body = $feed->body;
    $u->crc32 = $crc32;
    $u->save();
    return $u;
}
```
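The checksum comparison can be tried out in isolation with plain PHP. This is a minimal sketch: feedChecksum() and needsUpdate() are hypothetical helpers, and the field names are assumptions. A separator byte is joined between the values so that, for example, "ab" + "c" and "a" + "bc" do not produce the same input string. crc32 is fine for change detection; it is not meant as a security hash.

```php
<?php
// Hypothetical helper: checksum over a feed item's values, joined with a
// unit-separator byte (0x1F) so that value boundaries are preserved
function feedChecksum(array $values): int {
    return crc32(implode("\x1F", array_map('strval', $values)));
}

// Hypothetical helper: a missing stored checksum means the page does not exist yet
function needsUpdate(array $feedValues, ?int $storedChecksum): bool {
    return $storedChecksum === null || feedChecksum($feedValues) !== $storedChecksum;
}

$item   = ['title' => 'Hello', 'body' => 'World', 'status' => 1];
$stored = feedChecksum($item);

var_dump(needsUpdate($item, $stored)); // bool(false): nothing changed
var_dump(needsUpdate(['title' => 'Hello', 'body' => 'World!', 'status' => 1], $stored));
var_dump(needsUpdate($item, null));    // bool(true): create a new page
```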

I don't think it has anything to do with MySQL in this case; I have never heard of that. But I have often heard about it in regard to image resizing. Would it be possible to temporarily allow me to inspect this on the server, maybe if it is a staging account?

-

If they want to, why not? Sure, I would tell them not to use such big images where it is not necessary. But if they don't care, it is not my time spent waiting during the long, boring uploads. And if they are not able to scale down the images before uploading, it seems better to me to let them upload the hi-res originals instead of something scrambled because they don't know what they are doing.

-

In the case of images you can let them upload what they want, but ensure that it is in the highest possible quality. For the output you define width x height, quality, and even the output format (JPEG) if they use PNG where it is not appropriate.

-

You can check the real available memory on the frontend: `echo "<p>" . ini_get("memory_limit") . "</p>";` Maybe the settings from the admin panel are not passing through? No, the images are not too big: with more than 128M, they work every time for me. And if the process took too long, you would get a timeout error, not one for too little memory.
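`ini_get("memory_limit")` returns the php.ini shorthand notation (for example "128M"), so to compare it against a minimum it helps to convert it into bytes first. A minimal sketch; iniBytes() is a hypothetical helper and 256M is only an example target.

```php
<?php
// Hypothetical helper: convert a php.ini shorthand value such as "128M" into bytes
function iniBytes(string $value): int {
    $value = trim($value);
    if ($value === '-1') {
        return -1; // -1 means "no limit"
    }
    $unit   = strtolower(substr($value, -1));
    $number = (int) $value; // leading digits only, e.g. "128M" -> 128
    switch ($unit) {
        case 'g': $number *= 1024; // fall through
        case 'm': $number *= 1024; // fall through
        case 'k': $number *= 1024;
    }
    return $number;
}

$limit    = iniBytes((string) ini_get('memory_limit'));
$required = iniBytes('256M'); // example target for image processing
echo ($limit === -1 || $limit >= $required) ? "enough memory\n" : "memory_limit too low\n";
```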

-

I have PW 3.0.109, PHP 7.1.19-nmm1 and an older version of the DB Backup module. Everything is working fine. Which version of the DB Backup module do you use?

-

Hi @j00st, I have checked your images. They are all right, nothing suspicious. When I set memory_limit below 96M, it wasn't possible to resize a single image. With 96M, it worked most of the time, but sometimes not, when resizing multiple images in one script run. You have set it to 128M on your host. If you are able, increase it to 256M.

Animated images get extracted into memory frame by frame. Each frame is processed in a separate conversion process and consumes three times the memory of the single frame. The process also includes flushing and releasing objects and memory that are no longer needed, and this is known to be buggy (or unclean) in PHP. So the issue on your host is definitely caused by too little allowed memory for (PHP) image processing.

If you set debug to true, every resize action is logged into the "image-sizer" log under Admin > Setup > Logs. And you definitely need to use "forceNew" in your resize calls when debugging and testing!
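A rough back-of-the-envelope sketch of the "three times the memory of a single frame" rule. The 4 bytes per pixel (truecolor with alpha) is an assumption, and real consumption also depends on the engine and PHP's own overhead, so treat the result as an estimate, not an exact figure. estimateFrameBytes() is a hypothetical helper.

```php
<?php
// Hypothetical helper: rough peak memory for processing ONE frame,
// following the "about three times a single frame" rule of thumb.
// Assumption: 4 bytes per pixel (truecolor with alpha).
function estimateFrameBytes(int $width, int $height, int $bytesPerPixel = 4): int {
    $singleFrame = $width * $height * $bytesPerPixel;
    return 3 * $singleFrame;
}

$bytes = estimateFrameBytes(1440, 810);
printf("%d bytes (about %.1f MiB)\n", $bytes, $bytes / 1048576); // 13996800 bytes (about 13.3 MiB)
```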

-

Internal Server Error 500 - Strato Shared Hosting

horst replied to AndreasWeinzierl's topic in Getting Started

In addition to what has already been mentioned above, it could be write access to the assets folders and files. Often when uploading a complete site profile via FTP, I have to manually correct the access rights for the assets folders to include write access for PHP (the web server user, called wwwrun or whatever the respective host calls it).
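To find folders that PHP cannot write to, a small recursive check can help. This is a minimal sketch; nonWritableDirs() is a hypothetical helper, and the demo runs on a temporary folder. In a ProcessWire template you might pass `$config->paths->assets` instead.

```php
<?php
// Hypothetical helper: collect all directories below $root (including $root)
// that the PHP process cannot write to.
function nonWritableDirs(string $root): array {
    if (!is_dir($root)) {
        throw new InvalidArgumentException("Not a directory: $root");
    }
    $bad = is_writable($root) ? [] : [$root];
    $it = new RecursiveIteratorIterator(
        new RecursiveDirectoryIterator($root, FilesystemIterator::SKIP_DOTS),
        RecursiveIteratorIterator::SELF_FIRST,
        RecursiveIteratorIterator::CATCH_GET_CHILD // skip unreadable subfolders instead of throwing
    );
    foreach ($it as $path => $info) {
        if ($info->isDir() && !$info->isWritable()) {
            $bad[] = $path;
        }
    }
    return $bad;
}

// Demo on a temporary folder structure
$root = sys_get_temp_dir() . '/assets-demo-' . getmypid();
if (!is_dir($root . '/files/1')) {
    mkdir($root . '/files/1', 0775, true);
}
print_r(nonWritableDirs($root)); // an empty array means everything is writable
```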

Maybe: every PHP tutorial that you are able to understand and that isn't boring, plus reading small existing PW modules, plus an idea of what you want to build. https://processwire.com/docs/tutorials/ https://webdesign.tutsplus.com/tutorials/a-beginners-introduction-to-writing-modules-in-processwire--cms-26862

-

I'm very happy with PhpEd and a lot of its features, although I probably don't use 50% of them.

-

Can you PM me that image? Does it happen with all animated images or only with specific ones? Have you set the option to force a recreation of the variations (`"forceNew" => true`)? Otherwise you always get the previously cached version:

```php
<?php
$test = $img->url;
$test2 = $img->width(1440, array("forceNew" => true))->url;
```

-

Everything looks good. Can you please try the following code snippet in a template file and check the output?

```php
// add the full path to an original animated GIF here!
$imgPath = $config->paths->files . '1/animated.gif';

$ii = new ImageInspector();
$info = $ii->inspect($imgPath, true);
var_dump($info);

$is = new ImageSizer($imgPath);
var_dump($is->getEngines());
$shortInfo = $is->getImageInfo();
var_dump($shortInfo);
```

The first var_dump() should show true under "info" -> "animated", and the second one should contain only one array item, named "ImageSizerEngineAnimatedGif". If this is the case, we need to check further.
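To double-check outside of ProcessWire whether a file really contains several frames, a common quick heuristic is to count the Graphic Control Extension blocks (bytes 0x21 0xF9 0x04) that usually precede each frame. This is a swapped-in technique, not what ImageInspector does internally, and unusual files can fool it; isAnimatedGif() is a hypothetical helper.

```php
<?php
// Heuristic sketch: more than one Graphic Control Extension followed by an
// image descriptor (0x2C) or another extension (0x21) suggests an animation.
function isAnimatedGif(string $bytes): bool {
    if (strncmp($bytes, 'GIF8', 4) !== 0) {
        return false; // not a GIF at all
    }
    $count = preg_match_all('#\x21\xF9\x04.{4}\x00[\x2C\x21]#s', $bytes);
    return $count > 1;
}

// Usage with a real file (path is hypothetical):
// var_dump(isAnimatedGif(file_get_contents('/path/to/animated.gif')));
```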

-

Many thanks! That is very helpful.

-

Hmm, have you installed/enabled the animated GIF module? Which version is it? Which position in the internal module hierarchy does it have? And which other modules, with which hierarchy positions, are installed? The animated GIF module doesn't rely on Imagick.

-

It's actually over five years old, but it may contain some useful snippets:

-

You should ask him whether there is a setting that limits URL segments, if you are not able to check it yourself.

-

I believe it is a difference in the HTTP server, nothing to do with PHP. Can you compare the settings, or have you asked your host?