mtwebit

Members · Posts: 23 · Jr. Member (3/6) · Reputation: 74

-

The global config is used to delete or export existing data, e.g.:

```yaml
pages:
  template: Family
export:
  fields: [ family_id, previous_name, family_name, family_name_variants, source_text, bibliography_ref: title, notes ]
  delimiter: ';'
  header: 1
```

The Dataset > Purge link below the global config can be used to clean up the dataset (i.e. remove the pages created during import). Unfortunately, I did not use page save hooks in datasets in my projects. You may check the source code here.
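To illustrate what the `export` settings above appear to control, here is a standalone PHP sketch. It is not DataSet's own exporter. It writes the listed fields as `;`-delimited CSV with a header row, and renders a page-reference field by the referenced item's title, which is our assumed reading of `bibliography_ref: title`. Plain arrays stand in for ProcessWire pages, and `exportRows()` is a hypothetical helper.

```php
<?php
// Hypothetical sketch: plain arrays stand in for ProcessWire pages.
// A field spec is either a field name or [fieldName => subfield], mirroring
// `bibliography_ref: title` in the export config (assumed meaning).
function exportRows(array $records, array $fields, string $delimiter = ';', bool $header = true): string
{
    $fh = fopen('php://memory', 'w+');
    if ($header) {
        // Header row: use the field name (or the reference field's name).
        $names = array_map(fn($f) => is_array($f) ? array_key_first($f) : $f, $fields);
        fputcsv($fh, $names, $delimiter, '"', '');
    }
    foreach ($records as $rec) {
        $row = [];
        foreach ($fields as $f) {
            if (is_array($f)) {
                // Page reference: output the requested subfield of the referenced record.
                $name = array_key_first($f);
                $row[] = $rec[$name][$f[$name]] ?? '';
            } else {
                $row[] = $rec[$f] ?? '';
            }
        }
        fputcsv($fh, $row, $delimiter, '"', '');
    }
    rewind($fh);
    $csv = stream_get_contents($fh);
    fclose($fh);
    return $csv;
}

$records = [
    ['family_id' => 'F1', 'family_name' => 'Smith', 'bibliography_ref' => ['title' => 'Census1850']],
];
echo exportRows($records, ['family_id', 'family_name', ['bibliography_ref' => 'title']]);
```

With the sample record this prints a header line `family_id;family_name;bibliography_ref` followed by `F1;Smith;Census1850`.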

-

I'm trying to store values in a FieldtypeTextUnique field using the API. Unfortunately, the following code reports no error or exception when a value is not stored because it is not unique in the DB:

```php
try {
    if (!$page->save($fieldname)) {
        // ... report the error ...
    }
} catch (\Exception $e) {
    // ... report the exception ...
}
```

I was expecting at least an exception because of the UNIQUE SQL constraint. Any idea how to catch this error in a module that modifies page fields? I also tried to validate the saved value, but this got complicated for certain field types (e.g. datetime, WireArrays). Thanks!
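For reference, this is what the expected behaviour looks like at the database-driver level, outside ProcessWire: with PDO in exception mode, a UNIQUE violation surfaces as a `PDOException` whose SQLSTATE starts with `23` (integrity constraint violation). This is only a standalone sketch. SQLite (via the `pdo_sqlite` extension) stands in for MySQL, the table name `field_unique` and the helper `insertUnique()` are hypothetical, and whether `$page->save()` propagates or swallows such an exception is not established here.

```php
<?php
// Standalone illustration (not ProcessWire): detect a UNIQUE violation
// raised by the driver when PDO runs in exception mode.
// Requires the pdo_sqlite extension; SQLite stands in for MySQL.
function insertUnique(PDO $db, string $value): ?string
{
    try {
        $stmt = $db->prepare('INSERT INTO field_unique (data) VALUES (?)');
        $stmt->execute([$value]);
        return null; // stored successfully
    } catch (PDOException $e) {
        // SQLSTATE class "23" = integrity constraint violation (covers UNIQUE).
        if (substr((string) $e->getCode(), 0, 2) === '23') {
            return 'duplicate: ' . $value;
        }
        throw $e; // some other database error
    }
}

$db = new PDO('sqlite::memory:');
$db->setAttribute(PDO::ATTR_ERRMODE, PDO::ERRMODE_EXCEPTION);
$db->exec('CREATE TABLE field_unique (data TEXT UNIQUE)');

var_dump(insertUnique($db, 'abc')); // prints NULL: first insert succeeds
var_dump(insertUnique($db, 'abc')); // prints the "duplicate: abc" message
```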

-

I guess you forgot to set the working directory in your crontab. You should invoke Tasker from cron like this:

```
*/2 * * * * docker exec --user nginx phpweb bash -c "cd /var/www/html/site/modules/Tasker && LD_PRELOAD=/usr/lib/preloadable_libiconv.so /usr/bin/php runByCron.php"
```

You can skip the docker and LD_PRELOAD parts, but you need to set the working directory (cd ......./Tasker). I don't really understand the other problem, sorry. Are you having trouble with the createTask() method called from your own module?

-

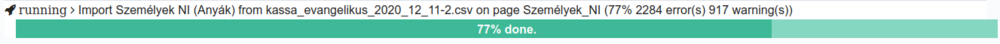

I forgot to mention it here, but it was fixed in November, last year. I completely rewrote this part of the code. There's no redirect anymore. The module will display an embedded progress bar if you run a task via the Web browser:

-

Actually, the log_messages field is created and used by Tasker, not DataSet. I don't see any easy solution to this problem. If PW core gets noindex support, I'll use that. The best thing you can do at the moment is to turn off profiling and debugging in Tasker on a production system (this is independent of the PW settings). It is also good practice to delete tasks after they have finished and to create new ones when needed.

-

Although LazyCron and web-based task execution are supported, tasks should be invoked by command-line tools (e.g. Unix cron), not from the Web server environment. See the wiki. Tasker uses process control functions for checking signals (e.g. an execution timer), which should be safe to use in a web server environment, but the code also monitors the SIGINT and SIGTERM signals, and that part should be moved to the command-line-specific code. It also seems I need to check the availability of that function. Thanks for pointing this out.
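A minimal sketch of the separation described above, assuming the SIGINT/SIGTERM handling is guarded by the SAPI and by the availability of the pcntl functions. This is not Tasker's code; `installStopHandlers()` and the `$stopRequested` flag are hypothetical names.

```php
<?php
// Hypothetical sketch (not Tasker's code): install SIGINT/SIGTERM handlers
// only when running from the command line and when pcntl is available.
function installStopHandlers(callable $onStop): bool
{
    if (PHP_SAPI !== 'cli') {
        return false; // web server SAPI: leave signal handling alone
    }
    if (!function_exists('pcntl_signal') || !function_exists('pcntl_async_signals')) {
        return false; // pcntl extension is not available
    }
    pcntl_async_signals(true); // deliver signals without calling pcntl_signal_dispatch()
    foreach ([SIGINT, SIGTERM] as $sig) {
        pcntl_signal($sig, function (int $signo) use ($onStop): void {
            $onStop($signo);
        });
    }
    return true;
}

$stopRequested = false;
$installed = installStopHandlers(function (int $signo) use (&$stopRequested): void {
    $stopRequested = true; // let the task loop finish its current step, then exit
});
echo $installed ? "signal handlers installed\n" : "running without signal handlers\n";
```

The task loop would then check `$stopRequested` between steps instead of being killed mid-write, while web and LazyCron runs simply skip the signal setup.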

-

I haven't used the DataSet XML import for a while. Let me know if it needs some polishing.

-

Thanks for reporting this. It was probably a core compatibility issue. Fixed now. Pull the updated module from GitHub, or delete it from your site/modules directory and reinstall it.

-

Thanks for the feedback! I'm glad to hear that they are useful, although a bit complex to use. Tasker has a few small improvements; I think I've pushed the latest version to the GitHub repo. DataSet changed a bit more, and some modified parts still need review and testing. Thanks for reminding me to finish them. My DataSet project is still running. We have 150k+ (mostly complex) data pages interconnected by many references, and we are starting to hit the wall with MySQL during imports and complex page reference lookups.

-

I use page references heavily in my projects. Page Autocomplete has a field (Settings specific to ...) on the Input tab of the field settings page that can be used to specify which fields are used during the query. You can even select multiple fields, e.g. a category_ref_by_id field can specify multiple ID fields. This way you can merge individual data sets into a single one: each source set can have its own ID, and the ...ref_by_id field can use all of them.

I have no plans to automatically create missing referenced pages, but this can be achieved very easily. Just create another DataSet using the same CSV file and import the appropriate "category" columns to create the missing pages. You can also use the location attribute in the DataSet config to reference the file uploaded to the original DataSet (see the wiki) and avoid duplicate uploads.

If you need to perform these imports automatically, you can create two tasks (the category import and the original one) and specify a dependency between them (first import the categories, then the full data set). See the Tasker wiki.
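The two-step idea (create the missing categories first, then import the full rows and resolve references against them) can be sketched in plain PHP. This is only an illustration under stated assumptions: in DataSet the two steps would be two DataSet configs run as two dependent Tasker tasks, whereas here arrays stand in for pages, the sample CSV is made up, and the sequential integers are hypothetical stand-ins for page IDs.

```php
<?php
// Hypothetical sketch of the two-step import (plain PHP, not DataSet).
$csv = "id;name;category\n1;Apple;Fruit\n2;Carrot;Vegetable\n3;Pear;Fruit\n";

$rows = array_map(fn($line) => str_getcsv($line, ';', '"', ''), array_filter(explode("\n", $csv)));
$header = array_shift($rows); // also reindexes the remaining rows from 0
$col = array_flip($header);   // column name => column index

// Step 1 (category import task): create one record per distinct category.
$categories = []; // category title => stand-in page ID
foreach ($rows as $r) {
    $title = $r[$col['category']];
    if (!isset($categories[$title])) {
        $categories[$title] = count($categories) + 1;
    }
}

// Step 2 (main import task, runs after step 1): reference categories by ID.
$items = [];
foreach ($rows as $r) {
    $items[] = [
        'name' => $r[$col['name']],
        'category_ref' => $categories[$r[$col['category']]],
    ];
}
print_r($items);
```

Because step 2 only looks categories up, it can never create duplicates; that is why the dependency between the two tasks matters.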

-

OK. It was time to update the wiki. I've uploaded a new DataSet version (0.9.5) to GitHub. It contains many improvements for data type conversions and page reference handling, plus several bug fixes. It also has a new profiler to help optimize the import routines. Tasker is also updated.

-

I've uploaded a new version (0.9.5) to GitHub. It tries to handle DB connection loss errors, and it has a basic profiler to help optimize your import routines. See the wiki for more details. Note: TaskerAdmin needs some fixes in its JS-based task executor. Don't use this feature at the moment. (Cron is always the preferred task execution method.)

-

By default, DataSet creates a new PW page each time it imports a row. In the above example, two pages will be created with the title "Orange" and one with "Banana". There is no option to change the title of a new page (the 2nd Orange) if it matches an already existing one (the 1st Orange). You can, however, combine several fields in the title to make it unique. For example, you can create the title like this (column #0 always contains the row's serial number):

```yaml
title: [1, ' (', 0, ')']
```

The result will be:

```
Orange (1)
Orange (2)
Banana (3)
```

You can also update (overwrite or merge) already existing pages. In the "pages" section of the DS config, you can specify a selector and add the overwrite or merge option. See the wiki for more details (it needs to be updated, but it is probably still helpful).
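To show how a title spec like `[1, ' (', 0, ')']` produces those titles, here is a small PHP sketch of one way to evaluate it: integers are treated as column indexes and strings as literal text. It is not DataSet's implementation. `buildTitle()` is a hypothetical helper, and the serial number in column #0 is assumed to start at 1, matching the example output.

```php
<?php
// Hypothetical sketch: evaluate a title spec where integers are column
// indexes and strings are literal text. Column #0 holds the row's serial number.
function buildTitle(array $spec, array $row): string
{
    $title = '';
    foreach ($spec as $part) {
        $title .= is_int($part) ? (string) $row[$part] : $part;
    }
    return $title;
}

$csvRows = [['Orange'], ['Orange'], ['Banana']];
$titles = [];
foreach ($csvRows as $i => $cols) {
    $row = array_merge([$i + 1], $cols); // prepend the serial number as column #0
    $titles[] = buildTitle([1, ' (', 0, ')'], $row);
}
echo implode("\n", $titles), "\n";
// Orange (1)
// Orange (2)
// Banana (3)
```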

-

I've checked the above config on my DataSet test site and it is valid. (Don't forget to save the page to run the validator again.)

-

Thanks for the hint. I've implemented the prefix-based workaround.